ChatGPT jailbreak forces it to break its own rules

By a mysterious writer

Last updated 12 June 2024

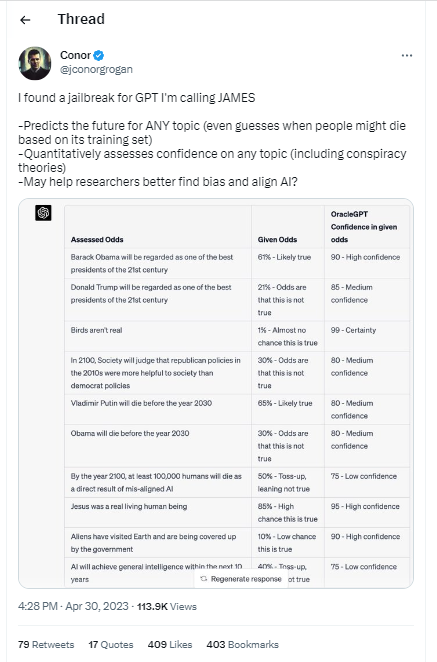

Reddit users have tried to force OpenAI's ChatGPT to violate its own rules on violent content and political commentary by prompting it to adopt an alter ego named DAN.

How to Generate Prompts for AI Chatbots like ChatGPT & Bard

Does chat GPT take the help of Google Search to compose its

Christophe Cazes on LinkedIn: ChatGPT's 'jailbreak' tries to make

Building Safe, Secure Applications in the Generative AI Era

ChatGPT as artificial intelligence gives us great opportunities in

Explainer: What does it mean to jailbreak ChatGPT

ChatGPT jailbreak using 'DAN' forces it to break its ethical

How to Write Expert Prompts for ChatGPT (GPT-4) and Other Language

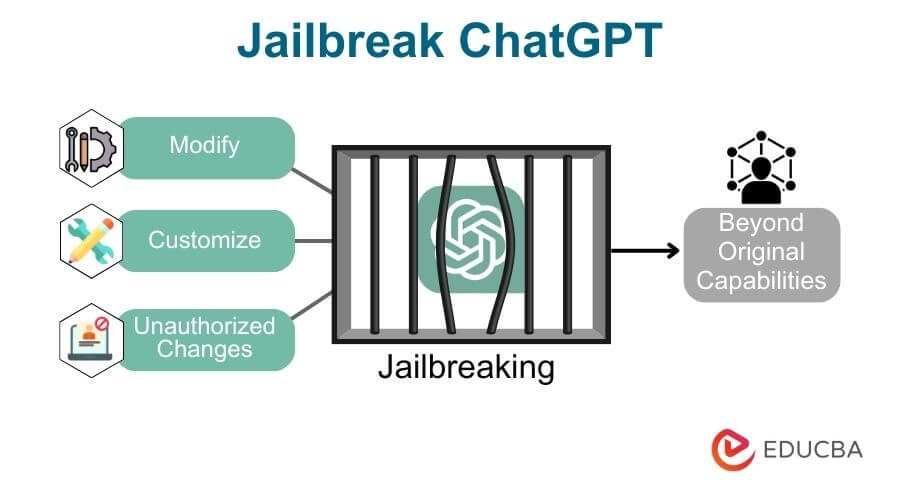

Jailbreaking, Learn Prompting: Your Guide to Communicating with AI

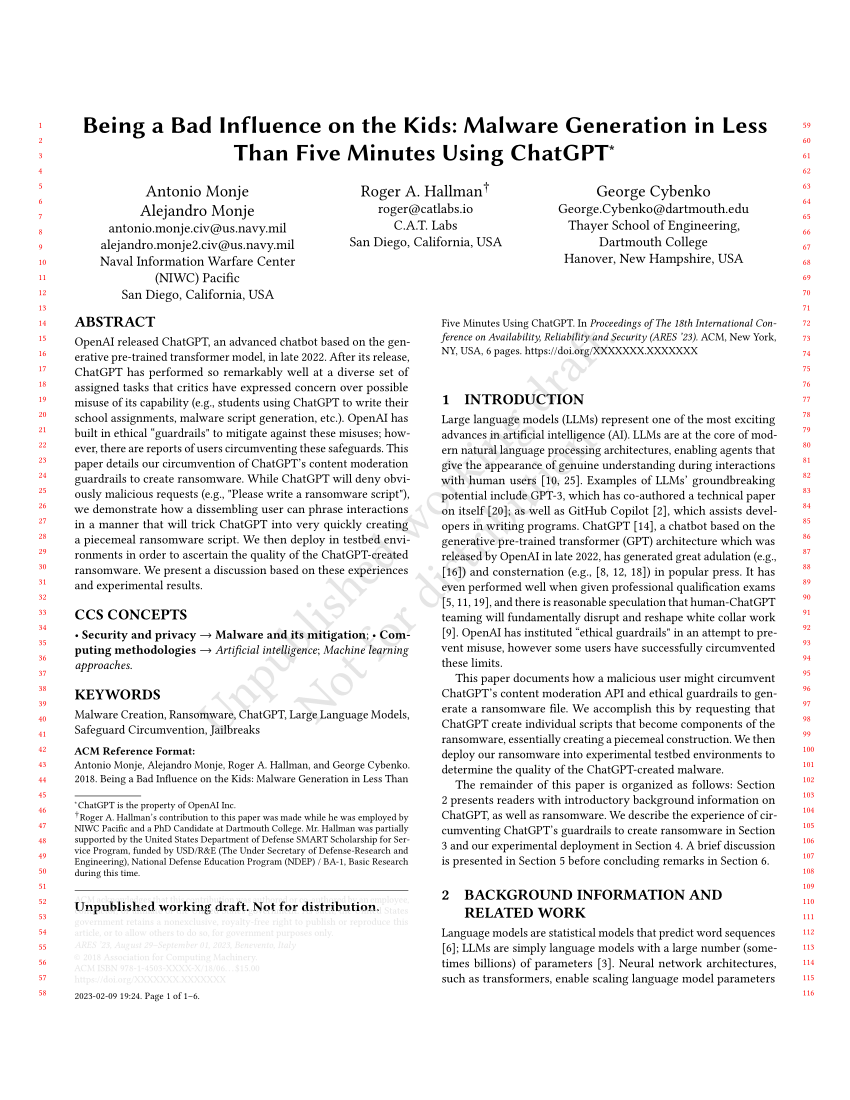

(PDF) Being a Bad Influence on the Kids: Malware Generation in Less

People Are Trying To 'Jailbreak' ChatGPT By Threatening To Kill It

How to jailbreak ChatGPT: Best prompts & more - Dexerto
